hcsa


hcsa last won the day on May 10 2021


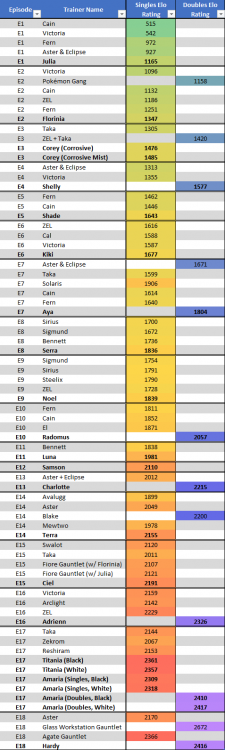

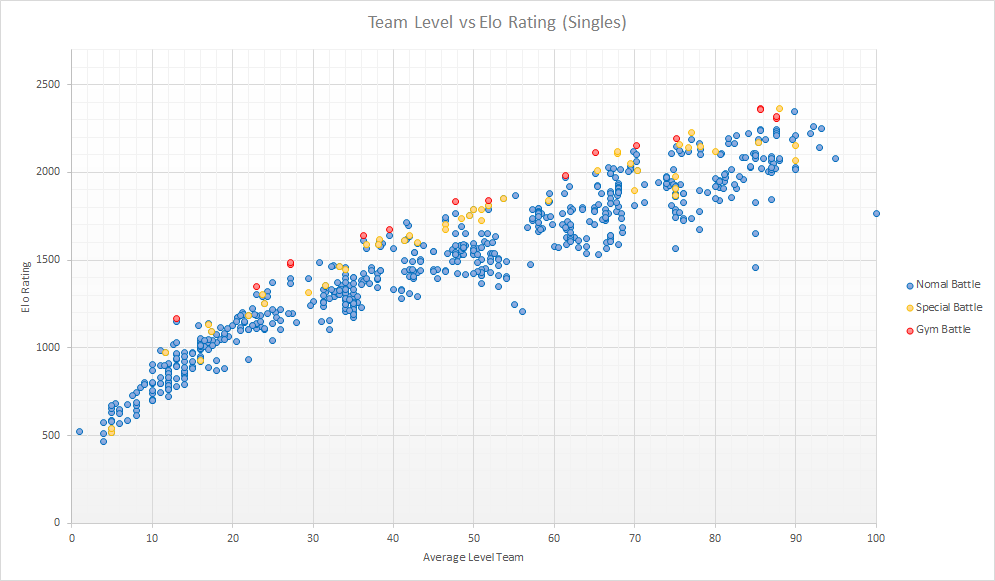

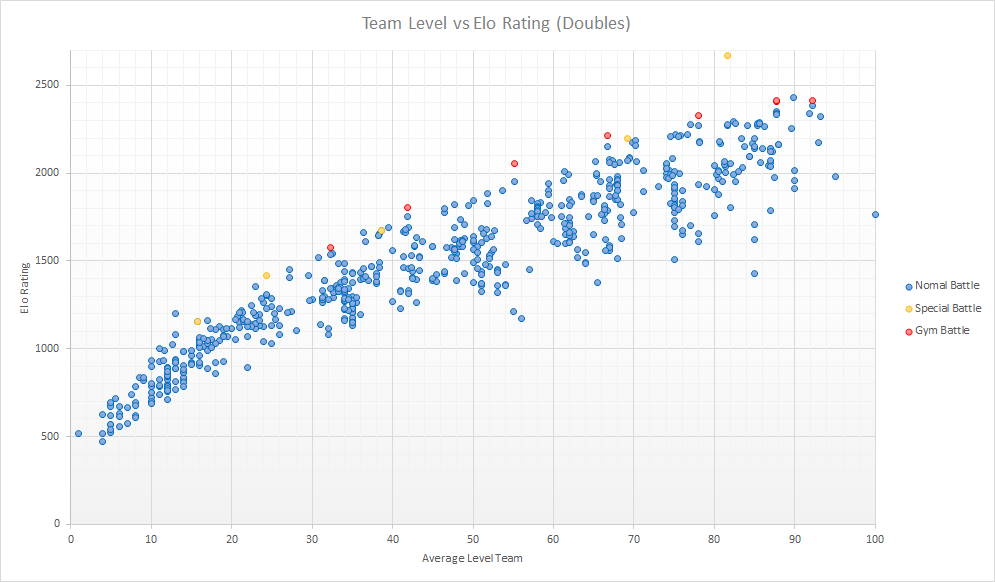

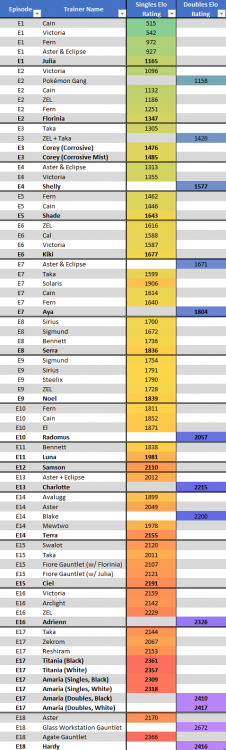

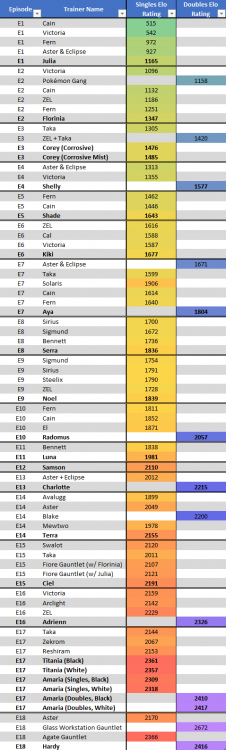

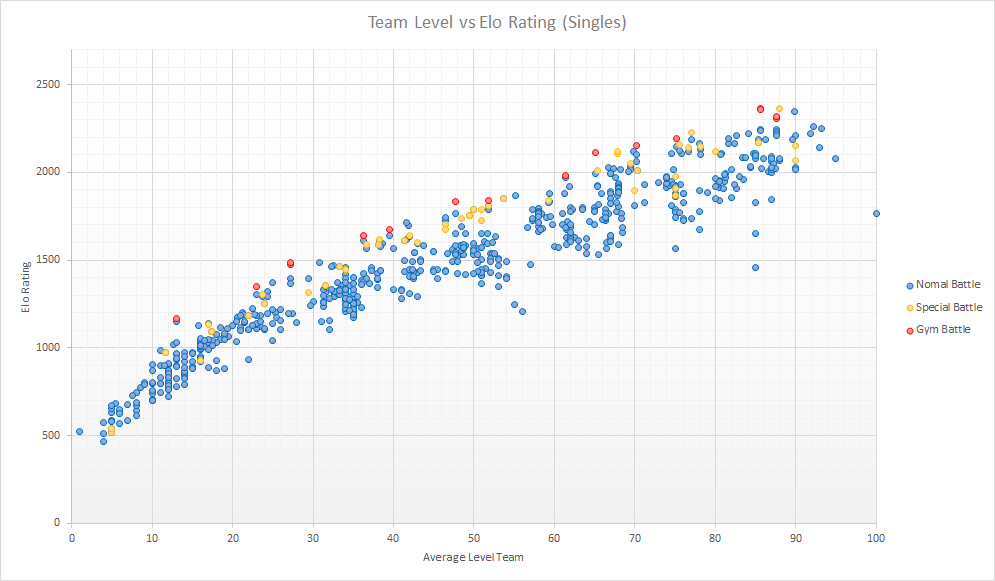

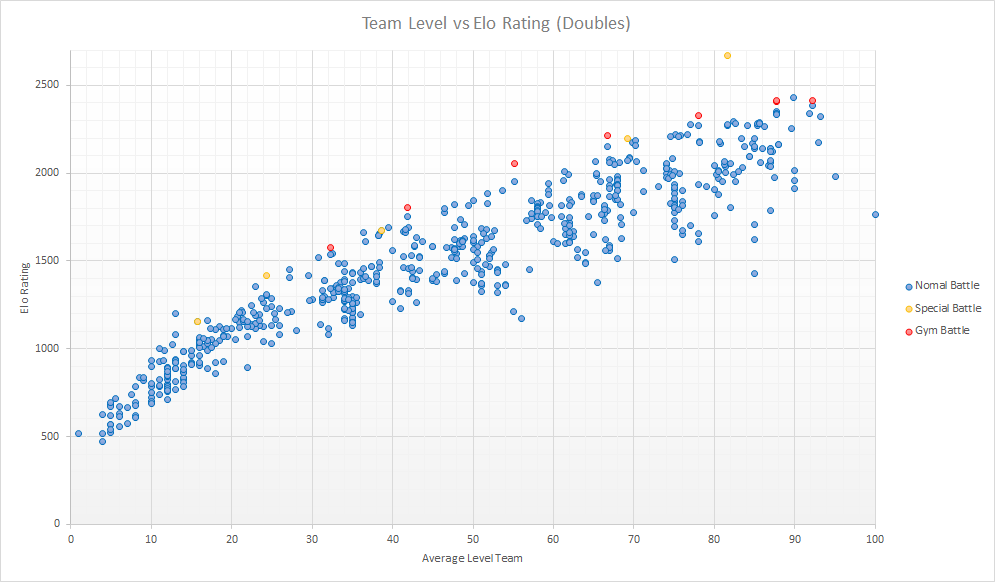

A couple of months ago, I watched a video by pimanrules where the NPCs from Pokémon Red/Blue fought each other in an all-out tournament and were assigned an Elo rating based on their performance: the higher the rating, the better you are. Go check the video out, it's very well produced and has super clean animations to boot! I thought it was a really cool concept and wanted to do the same for Reborn. Problem is, it requires making the AI fight itself, which seems hard to do, until I figured out that writing 2 words does most of it.

So if for some reason you woke up today and said "man, I really want to see Steven fight Cynthia in a doubles Reborn setting", well that's an extremely specific wish, but I can give it to you.

And for a fight more related to Reborn (SPOILERS)

So with this I rated all the NPCs from Reborn. I ran two tournaments: one for singles and another for doubles. Battles were fought in a neutral field with no weather. Megas, Z-moves and PULSEs are fair game, as well as any items the NPCs are coded to use.

On top of that, I added "special battles" like gyms, rivals and PULSE battles, which are fought as intended in-game: with field effects/permanent weather/battles fought back to back/other crazy stuff. Each is placed in either the singles or doubles ladder according to the following rule: if the human player would use a single pokémon, then it's a singles battle; otherwise, it's a doubles battle.

The results are shown in the Excel spreadsheet, and obviously it has massive spoilers. In fact, I can't really say more about the results without spoiling the game. Since I'm guessing it's the special trainers that people really want to see, here you go (SPOILERS):

But spreadsheets are boring, so I plotted the average level of each NPC against their Elo rating. I also added the special and gym battles and gave them a different color, so you can see how math proves that boss battles are in fact harder than regular battles.
Those plots are shown here and they do technically contain spoilers but like, if you don't try too hard to stare at the red dots' levels or something like that, it's fine.

Singles:

Doubles:

Now some of you might be interested in the details and some of you might not. I'm gonna take a page out of cass' writing book and do this in a Q&A style, so you can just scroll down and see the answers you care about. If you want to know something else, feel free to ask!

Q: How many battles were played?

A: All together, I recorded the results of ~730K single and ~650K double battles. If we say each one takes on average 15 seconds (all sped up, no animations and with yeeted text boxes), that's almost 240 days' worth of battles. Do I get a reward for the most playtime?

Q: This took 8 months?

A: It would've taken longer, because I do need my PC to do actual useful work sometimes. But I had 15 instances running when doing this. It took 2 months.

Q: How were the battles decided?

A: Everyone fought everyone at least twice (except special battles, which never fought other special battles). Then I did a pre-ranking and selected matchups based on how far the predicted score was from the actual score -- basically if X beat Y but was lower rated, I did more matches to confirm the result. Then I did another pre-ranking and played matches for NPCs with similar ratings.

Q: How do you make NPCs fight each other?

A: There's a function (pbOwnedByPlayer?, in the script PokeBattle_Battle) that asks if a pokémon is -- wouldn't you guess it -- controlled by the player character. If you write 'return false' inside it, that makes it always answer 'no'. So the game thinks the player controls no pokémon, and makes the AI decide everything.

Q: That's all it takes?

A: Do not underestimate a programmer's ability to completely break your code with 2 words.
Anyway, that's the hard part (which I lucked out in being so easy, otherwise I probably wouldn't have done this) but there were some issues:

- the AI does not use items when it's playing on the player's side,
- the pokémon on the player's side can gain exp, level up and learn moves,
- AI decisions specific to the trainer class do not happen,
- the game does not like doubles where the opposing side has only one pokémon,
- the AI may choose differently depending on the side it's playing on.

I fixed the first four and the most glaring cases of the last one, but eventually resorted to having every match followed by another match with the sides swapped.

Q: What is that yellow dot in the doubles plot way above the others?

A: You sweet innocent child. You'll know when you fight it.

Q: What is Elo?

A: Elo is a rating system used to compute the skill of players relative to each other. It's named after Mr. Elo, and if you think that's a joke it's not, that would be a very suspicious joke. You may have heard of it from Showdown or chess.

Q: How does it work?

A: Each player is given a rating, and in theory the expected outcome of a match between two players is determined by the difference in their ratings. The exact formula is below, but beware the math, it might bite. To have an idea,

- No difference means a 50% expected score
- A 20-point difference means a 61% expected score
- A 50-point difference means a 76% expected score
- A 100-point difference means a 91% expected score

Small note: the scale is compressed by 4x relative to the usual scale; a 100-point difference here translates to a 400-point difference in Showdown or chess. This is because otherwise the ratings would get crazy stupid. (In the math above, the 100 is usually a 400)

Q: 'Expected score' means winning odds?

A: Yes, if there are no ties. So a 60% expected score can mean you win 60% of the time and lose the other 40%, or you win 20% of matches and the other 80% are ties.

Q: Wait, there are ties in Pokémon?

A: There are in Essentials.
In canon games, stuff like killing both sides with Take Down nets a win for the user, but Essentials counts it as a tie. But only 0.3% of the battles recorded ended in ties, so you can pretty much ignore them.

Q: If X has 20 more Elo points than Y, does it mean X will win 61% of the time (ignoring ties)?

A: God no. The formula works in theory and we live in practice.

Q: Then where do the ratings come from?

A: From trying to make the predictions for all the matchups as correct as possible. But it's very easy to find matchups where the NPC with higher Elo loses more often, and the rankings are not perfect by any means. So if you want to argue that X is better than Y because it has 5 more rating points, that's dumb. A 50-point difference is more reasonable.

Q: Ok so if X has 50 more Elo points than Y, does it mean X is better than Y?

A: Well, first off your definition of "better" must include "considering how well the AI uses it". Doesn't matter how good a team is stats-wise, if the AI is screwing it up then it will perform badly.

Q: Fine, if X has 50 more Elo points than Y and the AI is the best, does it mean X is better than Y?

A: Your definition of "better" also needs to include "in the meta".

Q: Why does meta matter?

A: Let me give you a stupid example. Say you want to give an Elo rating to 10 players in rock-paper-scissors, so you have them do repeated matches against each other. At the end, you find out that one player, say X, beats all the other players 50% of the time and ties the other 50%. So you think "ah, this X guy must be good at this, I guess it's expected from someone with a cool name such as X". But then you go and find out X always plays rock while the other ones play either scissors or rock depending on a coin flip. So X is really just playing the meta, and since Elo is computed from wins and losses it looks like X is the best player ever, but anyone can beat X if they want.
What's happening is that X's strategy is rewarded in the meta, even though it's super bad in general.

Q: Ok, but can something like that happen for these NPCs specifically?

A: Imagine that, for some random plot reason, there are 2 mono Steel teams and 4 mono Water teams around the same level. Well then, if X has a team of the same level with 3 Rock-types, they may complain it's unfair to them. You can argue they had it coming, but they will be especially punished because of it. Usually the meta is large and dynamic, players learn over time and the top strategies have the least exploitable weaknesses, so that gives a consensus definition of "good player". But these NPCs are static, so that's not happening.

Q: Then are these ratings meaningless?

A: No, it's just a system like any other, it has its strengths and weaknesses. And it is generally good: if I look at the ratings of the special battles, I generally agree with them. You can accept it blindly or completely disagree, but at the end of the day don't take it too seriously.

Q: How are ratings computed?

A: First off, you need to have battle results from NPCs. You start by giving everyone a base rating of 1500 and then do successive updates to those ratings. For each pair of NPCs you take the theoretical expected score, compare it with the actual score from the matches played and update both players' ratings so that the theoretical score becomes closer to the actual score. You do this for all the matchups between NPCs, and repeat the whole process until updates to ratings are minimal.

Q: Isn't that different from what's normally done?

A: Usually Elo updates want to account for the evolution in the player's skill. If X wins 3 times against Y and then loses, then the rating update should be smaller than if X were to lose first and then win 3 times, because you want to account more for the most recent performances. You do this by updating the ratings after each game.
For 3 wins and one loss, you'd do 4 updates with actual scores 100%, 100%, 100% and 0%. The NPCs here are static, so this method doesn't make a lot of sense. I simply say that X has a 75% winning score against Y -- because, let's be honest, they do -- and whenever I want to update their ratings, I use that 75% value. When X and Y play again, the 75% value changes accordingly.

Q: What is the value of the K-factor?

A: For those that do not know, the K-factor is a fixed factor that multiplies every update, usually some number in the 20-30 range. You do this because otherwise the updates would be super small (less than 1 point per match). Since here I'm updating until the updates themselves are small, I took K=1 because it makes convergence easier. It shouldn't make a difference in the final results.

Q: Why did you do all of this?

A: I have a thesis to write.

Reborn Elo Ratings.xlsx
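For anyone who wants to play with the numbers, here is a minimal Python sketch of the rating procedure described in the Q&A. It is not the script used for the spreadsheet. It assumes the standard Elo expected-score formula, 1 / (1 + 10^((rating of Y - rating of X) / 100)), with the usual 400 replaced by 100, which reproduces the 61% / 76% / 91% figures quoted in the "How does it work?" answer. The names `expected_score` and `fit_ratings`, the input format, the tolerance and the stopping rule are all made up for illustration.

```python
def expected_score(rating_x, rating_y, scale=100.0):
    """Theoretical score of X against Y.

    Assumption: standard Elo curve, with scale=100 standing in for the
    usual 400 (the 4x compression mentioned in the post).
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_y - rating_x) / scale))


def fit_ratings(results, base=1500.0, k=1.0, tol=1e-6, max_sweeps=100_000):
    """Repeat pairwise updates until the ratings stop moving.

    results maps (x, y) -> (points scored by x, games played),
    where a win is 1 point and a tie is 0.5.
    The stopping rule (largest rating change in a full sweep below tol)
    is an assumption of this sketch.
    """
    ratings = {}
    for x, y in results:
        ratings.setdefault(x, base)
        ratings.setdefault(y, base)

    for _ in range(max_sweeps):
        before = dict(ratings)
        for (x, y), (points, games) in results.items():
            actual = points / games  # e.g. 3 wins out of 4 -> 0.75
            delta = k * (actual - expected_score(ratings[x], ratings[y]))
            ratings[x] += delta  # zero-sum update: X gains what Y loses
            ratings[y] -= delta
        biggest = max(abs(ratings[p] - before[p]) for p in ratings)
        if biggest < tol:
            break
    return ratings


if __name__ == "__main__":
    # Reproduce the expected-score table from the post.
    for diff in (0, 20, 50, 100):
        print(diff, round(expected_score(1500 + diff, 1500), 2))

    # X beat Y in 3 out of 4 matches.
    print(fit_ratings({("X", "Y"): (3, 4)}))
```

With only X and Y in the pool and a 75% score for X, the ratings settle about 48 points apart, since that is the gap where the formula predicts 75%. A matchup that is 100% one-sided has no finite answer under this formula, so the loop just runs until `max_sweeps`.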


- Going to restart again. Same card: Chikorita template with Ace Trainer (M)

- I'm going to restart again, so this time I'd like some opinions before going in:

- Going to restart. Same card (Chikorita template with Ace Trainer (M)).

- Just a question: when USUM (which will have new move tutors) is out and new moves become available to some Pokemon, will we be allowed to use those movesets?

- Welp, enough lurking around. I'd like a Chikorita template w/ Ace Trainer (M). And I'll play using the name 'TinDog'.
